Richard Ogden and Marina Cantarutti

Prof. Richard Ogden (University of York) and Dr. Jürgen Trouvain (Saarland University) have been awarded an AHRC-DFG grant as Principal Investigators for the project: Brevit – Breathing behaviour and non-lexical vocalisations in talk-in-interaction. The team also features two full-time investigators, Dr. Marina Cantarutti (York) and Dr. Sascha Schaefer (Saarland).

The two-year project, funded by the Arts and Humanities Research Council (AHRC) and the German Research Foundation (DFG), will examine the role of breathing in non-lexical vocalisations in natural spoken interaction in English (as a first and second language), German and French.

The project builds on existing interactional and experimental research on breathing in interaction, and will focus on many underexamined sounds in conversation: audible in- and out-breaths, clicks, laughter, sighs and gasps. As we know from a rich body of research in Conversation Analysis (e.g. Keevallik & Ogden, 2020; Dingemanse, 2020; Robinson, 2023), these non-lexical vocalisations are common in conversation and have crucial functions such as regulating turn-taking, prefacing assessments and displaying affective stance; yet such sounds are rarely replicated in experimental data.

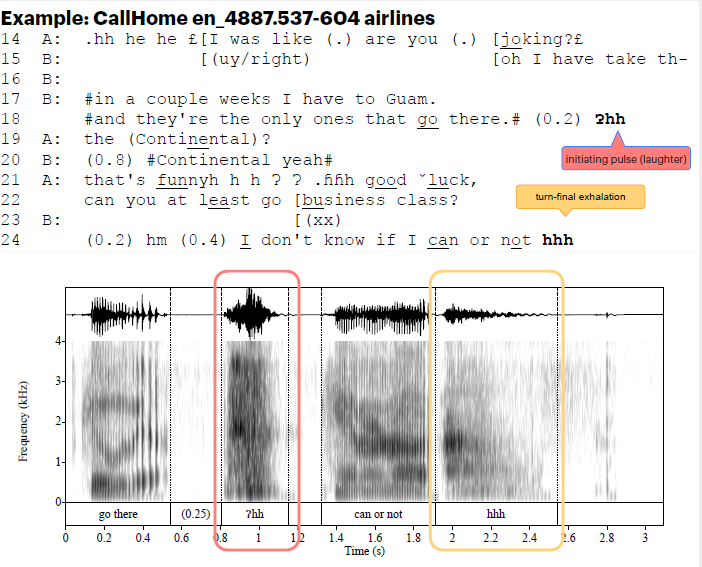

For example, exhalations in post-completion position are often found as initiating pulses in laughter (Ogden et al., 2025), or as turn-final, turn-projecting outbreaths (Local & Walker, 2012), as in the example below. We know these differ positionally and in terms of social action; because they often also differ auditorily and acoustically, understanding the features and affordances of their production may give us valuable information about how they are timed and coordinated with talk.

Currently, our knowledge of the phonetic form and positioning of non-lexical vocalisations and the link to breathing behaviour in spoken talk-in-interaction is still very limited:

- We understand rather little about their variability, or what role this variability plays in spoken interaction;

- We lack conversational data with high-quality audio, video and respiratory kinematic data that allows us to track breathing patterns;

- We need an interactionally-informed understanding of how breathing and speaking in conversation are intertwined.

By combining the methods of Conversation Analysis and Phonetics, we will achieve a more nuanced understanding of the variability we see in speech, using categories grounded in interaction that help us explain their role in turn-taking, turn-construction and the organisation of social actions, and seeing conversation as a joint, social achievement.

The project has four main aims:

- To investigate the relationship between breathing and affiliated vocalisations in natural spoken interaction in English (including as a second language), German and French.

- To identify and explain the variable forms and functions of breathing and affiliated sounds, such as audible breath noises, laughter, and tongue clicks.

- To investigate how such sounds contribute to the fine-grained timing, turn construction, and social organisation of conversation.

- To explore the emergent nature of turns at talk, marked by e.g. pauses, turn-taking cues, unfolding sentence structures, and signs of speech planning, and ultimately to visualise and model the core elements of this linguistic organisation.

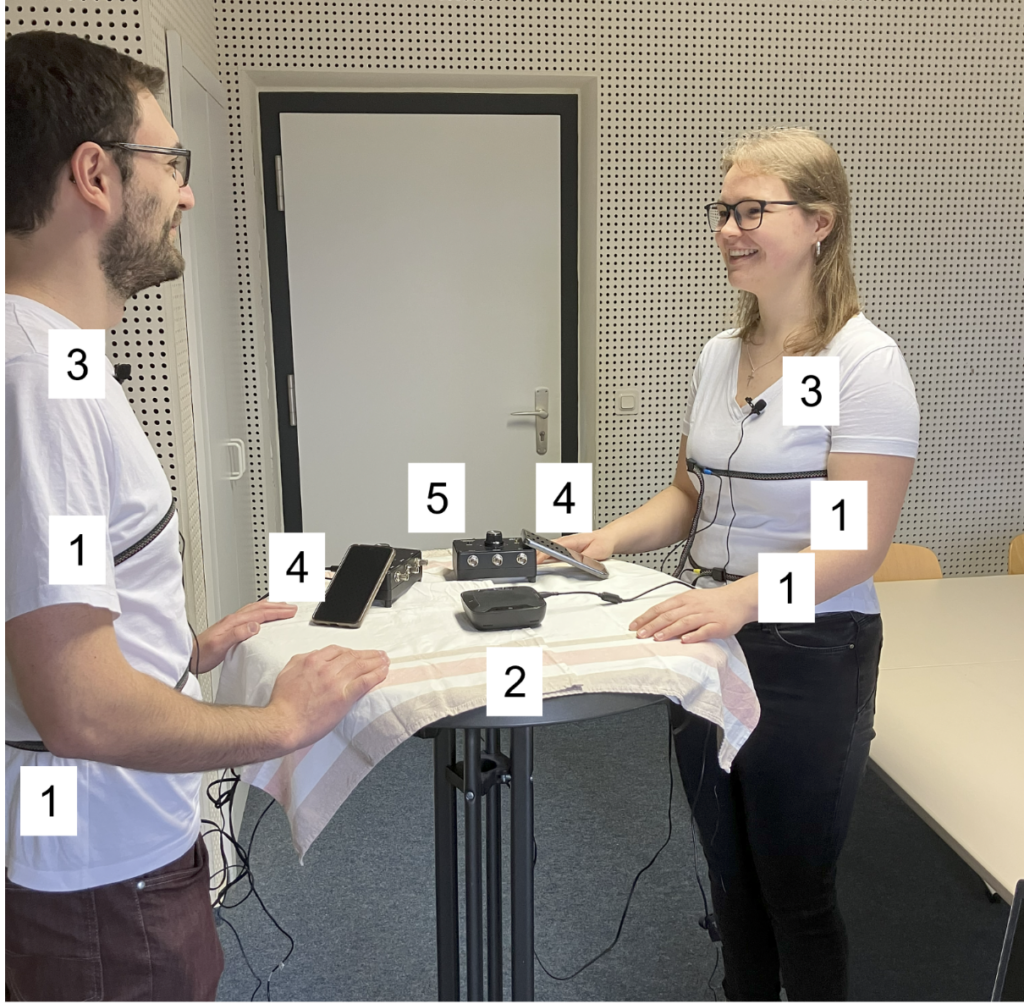

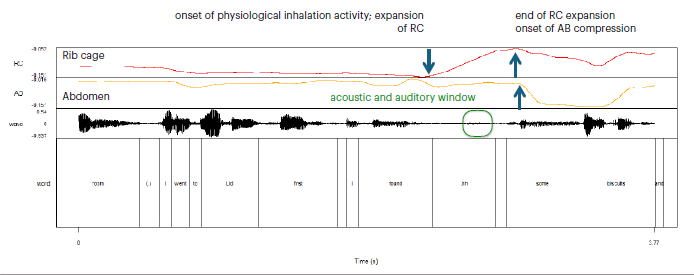

To achieve this, we will collect and analyse a novel dataset of high-quality conversational recordings, complete with synchronised audio, video, and non-invasive respiratory kinematic data collected with respiratory belts (RespTrack; see Figures 1 and 2).

Figure 1. Mockup of two recording environments to collect interactional data: session 1: lab recording (left); session 2: “living room interaction” (right). References: 1) inductive belts, 2) table microphone, 3) lapel microphones, 4) 360 camera, 5) console.

Figure 2. Output of the respiratory track signal, showing the timing of breathing preparation in the rib cage and abdomen (tracks 1 and 2), and on the waveform (track 3) an estimation of the window of potential perception of breathing sounds based on acoustic output.

The funding awarded will contribute to the teams in York and Saarbrücken holding regular meetings as well as a workshop, presenting findings at conferences, and acquiring the equipment to implement the respiratory tracking using the Respiratory Inductance Plethysmography (RIP) method. Early progress and results are expected to be presented at the following conferences: British Association of Academic Phoneticians (BAAP 2026, University of Warwick), Technology use and social interaction: New interactive practices, new data and methods (AGF 2026, Mannheim), the International Pragmatics Conference (IPC 2027, Helsinki), and the International Congress of Phonetic Sciences (ICPhS 2027, Victoria BC, Canada), as well as at a workshop later in the project. We will also publish our results and methodological reflections in journals on phonetics and social interaction. Data and resources from the project will be made openly available at the Bavarian Archive for Speech Signals (BAS).

The project will enable conversation analysts, phoneticians, and the public to gain a better understanding of our visible and audible breathing behaviours, their timing, and their affordances for the organisation and coordination of social action.